Pipeline

Understanding Pipelines

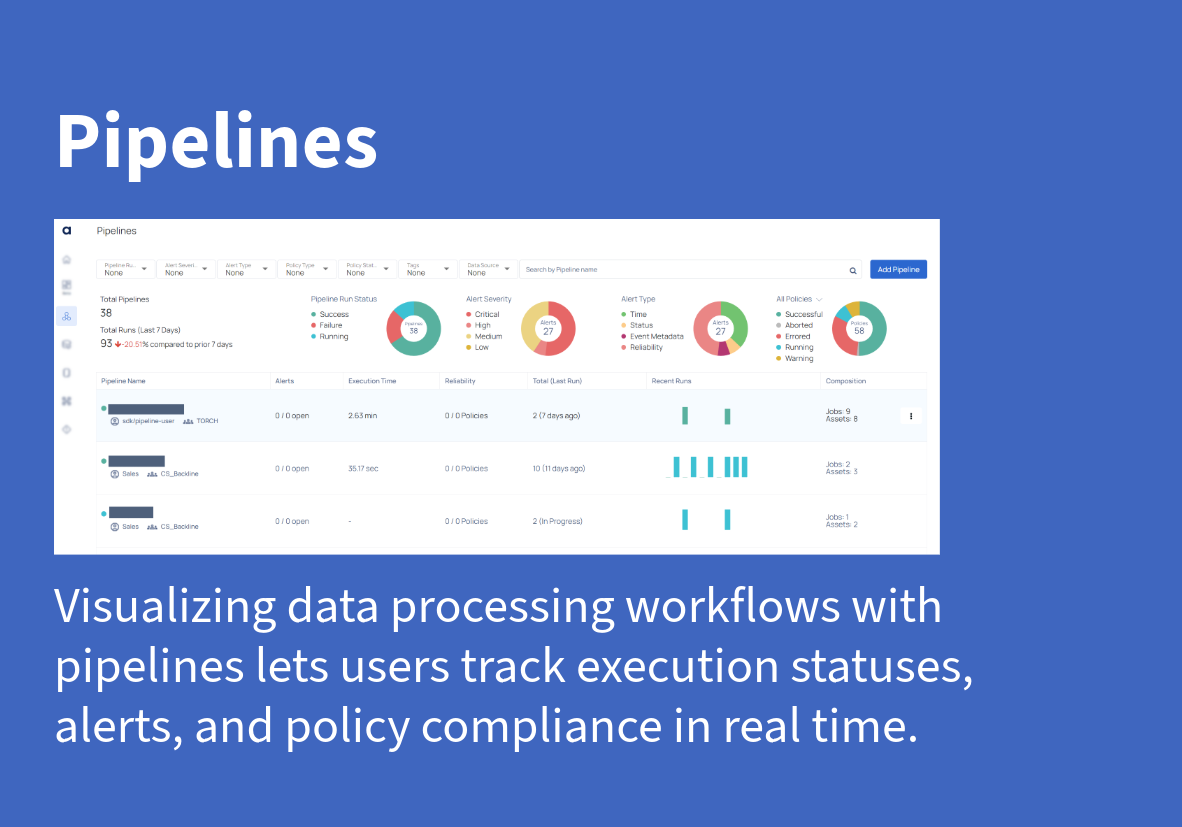

Pipelines in ADOC are a valuable tool for observing and managing data operations. They allow you to monitor the performance, reliability, and timeliness of your data processing activities, thereby ensuring that everything works well. The Pipeline feature allows you to track each stage of your data operations, receive alerts for abnormalities, and see the status of each operation at a glance.

Key Concepts in Pipelines

Pipeline: In ADOC Pipelines, a pipeline represents the entire data workflow, from data ingestion to processing and validation. It is the root entity that encompasses all the processes, data movements, and transformations.

Pipeline Run: A Pipeline Run in ADOC captures a specific instance or execution of the pipeline. It includes details such as metadata, performance metrics, and alerts. By analyzing Pipeline Runs, users can understand how their data flows through the system and make necessary adjustments.

Entities in Pipeline Runs

ADOC’s Pipeline Runs are composed of several entities that allow users to visualize and analyze their data operations effectively. The key entities in a pipeline run include:

| Entity | Description |

|---|---|

| Job Nodes | In the context of data pipelines, Job Nodes are responsible for executing specific tasks such as reading or writing data to databases, or performing data validation to assess the overall quality of data assets. In order to establish a comprehensive pipeline data lineage, you can connect Job Nodes with both input and output Asset Nodes. |

| Asset Nodes | Asset Nodes in a data pipeline serve as a representation of the data that is either consumed or produced by Job Nodes. By utilizing Data Reliability policies in Data Reliability, you can monitor and ensure the overall quality and reliability of data generated by the pipeline when compared to the data assets catalog. |

| Spans | Spans are used to furnish additional and more detailed information about a particular task or assignment. To manage a complex task, you can generate child spans and monitor them separately, attaching processors to spans to trigger events |

| Events | Events represent specific actions within a pipeline run. Each event is linked to a span and carries key-value information related to that action. Events help you track and visualize the state of a pipeline task in real time. |

Creating Pipelines in ADOC

Pipelines can be created using the ADOC UI or through SDK methods. Populating a pipeline with job details and related assets can only be done using SDK. To create a pipeline:

Step 1: Navigate to the Pipelines section in ADOC.

Step 2: Click on Add Pipeline.

Step 3: Configure your pipeline by adding nodes and connecting them to relevant assets.

Step 4: Save and run the pipeline to view its execution details.

For more detailed information, see How to create or add a Pipeline?

A pipeline can be added using the UI or using SDK. The populating of a pipeline with details of jobs and related assets can only be done using SDK.

Monitoring Pipelines

After the pipeline is created, you can monitor its execution by analyzing various metrics like:

- Execution Status: Whether the pipeline is running, successful, or has failed.

- Alerts: Notifications for any issues or deviations during pipeline execution.

- Policy Compliance: Adherence of pipeline data to set data reliability and quality policies.

With these monitoring capabilities, users can gain insight into their data processes and ensure smooth operations across their data workflows.