Install Ozone 2

This documentation covers Ozone2 setup with ODP, managed by Ambari as an mpack. It also describes the Ozone configuration and functionality needed to work with Hadoop-related services and S3, with or without security enabled in the form of authentication (Kerberos) and/or authorization (Ranger).

Ozone2 Storage Elements

Ozone, an object store for Hadoop, organizes data into volumes, buckets, and keys:

- Volume: Volumes are similar to user accounts. Only administrators can create or delete volumes. Ozone creates the `s3v` volume during initialization to support the S3 protocol.

- Bucket: Buckets are similar to directories and reside in a volume. They resemble Amazon S3 buckets in terms of hierarchy and ACLs. A bucket can contain any number of keys, but buckets cannot contain other buckets. Ozone Manager supports two bucket layout formats: Object Store (OBS) and File System Optimized (FSO). By default, Ozone sets the layout of all buckets to `FILE_SYSTEM_OPTIMIZED`, but buckets under the `s3v` volume use the `OBJECT_STORE` layout.

- Key: Keys are similar to files.

Environment Details

Ozone2 env directories

- PID - /var/run/ozone2

- log - /var/log/ozone2

- conf - /etc/ozone2/conf

- om conf - /etc/ozone2/conf/ozone.om

- lib - /usr/odp/{stack_version}/ozone2/share/ozone/lib

- ozone home - /usr/odp/{stack_version}/ozone2

Configurations

Metadata directories

Ozone2 Manager (OM)

| Configuration key | Default value | Purpose |

|---|---|---|

| ozone.om.db.dirs | /var/lib/ozone2/om/ozone-metadata | Ozone Manager Metadata directories. This should be specified as a single directory |

| ozone.om.ratis.storage.dir | /var/lib/ozone2/om/ratis | Used for storing OM's Ratis metadata like logs. If undefined, OM ratis storage dir will fallback to ozone.metadata.dirs. |

| ozone.om.ratis.snapshot.dir | /var/lib/ozone2/om-snapshots | Directory to store OM's snapshot related files like the ratisSnapshotIndex and DB checkpoint from leader OM. |

Storage Container Manager (SCM)

| Configuration key | Default value | Purpose |

|---|---|---|

| ozone.scm.db.dirs | /var/lib/ozone2/scm/data | Directory where the StorageContainerManager stores its metadata. This should be specified as a single directory. If the directory does not exist then the SCM will attempt to create it. |

| ozone.scm.ha.ratis.storage.dir | /var/lib/ozone2/scm/ratis | Storage directory used by SCM to write Ratis logs. |

| hdds.metadata.dir | /var/lib/ozone2/hdds-metadata | Absolute path to HDDS metadata dir. Part of SCM balancer properties. |

Ozone2 Datanode (DN)

| Configuration key | Default value | Purpose |

|---|---|---|

| hdds.datanode.dir | /data/ozone2/datanode/data | Determines where on the local filesystem HDDS data will be stored. Defaults to dfs.datanode.data.dir if not specified. |

| ozone.metadata.dirs | /var/lib/ozone2/services-metadata/dn-metadata | Fallback location for SCM, OM, Recon and DataNodes to store their metadata. In ODP ozone, used only for datanode metadata. |

| dfs.container.ratis.datanode.storage.dir | /var/lib/ozone2/datanode/ratis/data | Ozone Datanode Ratis Data Directory, to store metadata like logs |

| ozone.scm.datanode.id.dir | /var/lib/ozone2/datanode | The path that datanodes will use to store the datanode ID. |

Recon Service

| Configuration key | Default value | Purpose |

|---|---|---|

| ozone.recon.db.dir | /var/lib/ozone2/recon/data | Ozone RECON UI data directory. This should be specified as a single directory. If the directory does not exist then the Recon will attempt to create it. |

| ozone.recon.om.db.dir | /var/lib/ozone2/recon/om/data | Directory where the Recon Server stores its OM snapshot DB. This should be specified as a single directory. If the directory does not exist then the Recon will attempt to create it. |

| ozone.recon.scm.db.dirs | /var/lib/ozone2/recon/scm/data | Directory where the Recon Server stores its SCM snapshot DB. This should be specified as a single directory. If the directory does not exist then the Recon will attempt to create it. |

Ozone2 Service Endpoints

Ozone2 Manager (OM)

| Default Port | Configuration key | Endpoint Protocol | Purpose |
|---|---|---|---|
| 9862 | ozone.om.rpc.port | Hadoop RPC | RPC endpoint for clients and applications |
| 9872 | ozone.om.ratis.port | GRPC | RPC endpoint for OM HA instances to form a RAFT consensus ring |
| 9874 | ozone.om.http-port | HTTP | Ozone Manager web UI |
| 9875 | ozone.om.https-port | HTTPS | Ozone Manager web UI |

Storage Container Manager (SCM)

| Default Port | Configuration key | Endpoint Protocol | Purpose |

|---|---|---|---|

| 9861 | ozone.scm.datanode.port | Hadoop RPC | Port used by DataNodes to communicate with the SCM |

| 9863 | ozone.scm.block.client.port | Hadoop RPC | Port used by the Ozone Manager to communicate with the SCM for block related operations |

| 9860 | ozone.scm.client.port | Hadoop RPC | Port used by Ozone Manager and other clients to communicate with the SCM for container operations |

| 9876 | ozone.scm.http-port | HTTP | SCM Web UI |

| 9877 | ozone.scm.https-port | HTTPS | SCM Web UI |

| 9895 | ozone.scm.grpc.port | | |

| 9894 | ozone.scm.ratis.port | | |

| 9961 | ozone.scm.security.service.port | | |

Ozone2 Datanode (DN)

| Default Port | Configuration key | Endpoint Protocol | Purpose |

|---|---|---|---|

| 9882 | ozone.datanode.http-address | HTTP | DataNode Web UI |

| 9883 | ozone.datanode.https-address | HTTPS | DataNode Web UI |

| 9858 | dfs.container.ratis.ipc | GRPC | RAFT server endpoint that is used by clients and other DataNodes to replicate RAFT transactions and write data. |

| 9859 | dfs.container.ipc | GRPC | Endpoint that is used by clients and other DataNodes to read block data. |

| 9856 | dfs.container.ratis.server.port | | |

| 9857 | dfs.container.ratis.admin.port | | |

| 19864 | hdds.datanode.client.address | | |

S3 Gateway (S3G)

| Default Port | Configuration key | Endpoint Protocol | Purpose |

|---|---|---|---|

| 9878 | ozone.s3g.http-port | HTTP | S3 API REST Endpoint |

| 9879 | ozone.s3g.https-port | HTTPS | S3 API REST Endpoint |

| 0.0.0.0:19878 | ozone.s3g.webadmin.http-address | HTTP | The address and port where Ozone S3Gateway serves web content (like admin and jmx endpoints) |

| 0.0.0.0:19879 | ozone.s3g.webadmin.https-address | HTTPS | The address and port where Ozone S3Gateway serves web content (like admin and jmx endpoints) |

Recon Service

| Default Port | Configuration key | Endpoint Protocol | Purpose |

|---|---|---|---|

| 9891 | ozone.recon.rpc-port | Hadoop RPC | Port used by Datanodes to communicate with Recon Server (reporting) |

| 9898 | ozone.recon.http-port | HTTP | Recon service Web UI and REST API |

| 9899 | ozone.recon.https-port | HTTPS | Recon service Web UI and REST API |

Ozone2 Installation

Ozone2 integration with ODP is available as an Ambari Mpack. Download the ozone2-mpack tarball to your ambari-server node and install the mpack.

```shell
# Install mpack
ambari-server install-mpack --mpack=ambari-mpacks-ozone2.tar.gz --verbose

# Restart ambari
ambari-server restart
```

This Mpack supports the Ozone2 service in HA and non-HA installations, on fresh installations only.

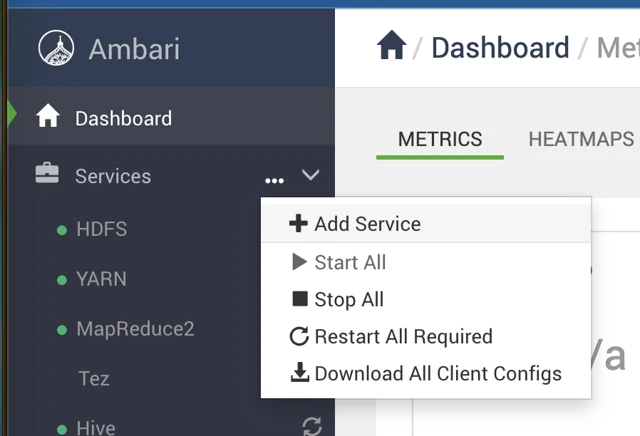

Navigate to the Ambari UI.

- Ambari UI > Services > Add Service

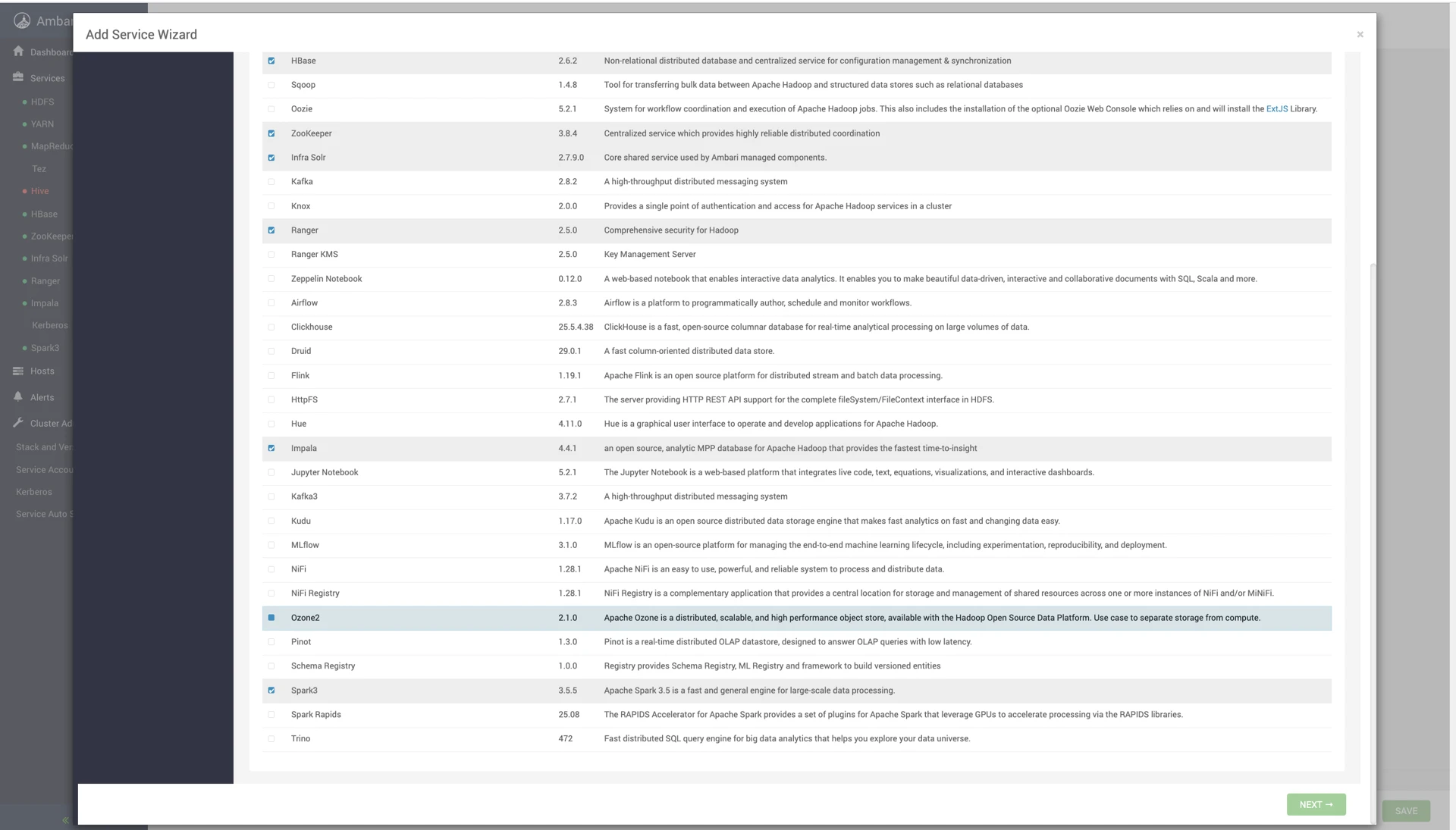

- Service Wizard > select Ozone2

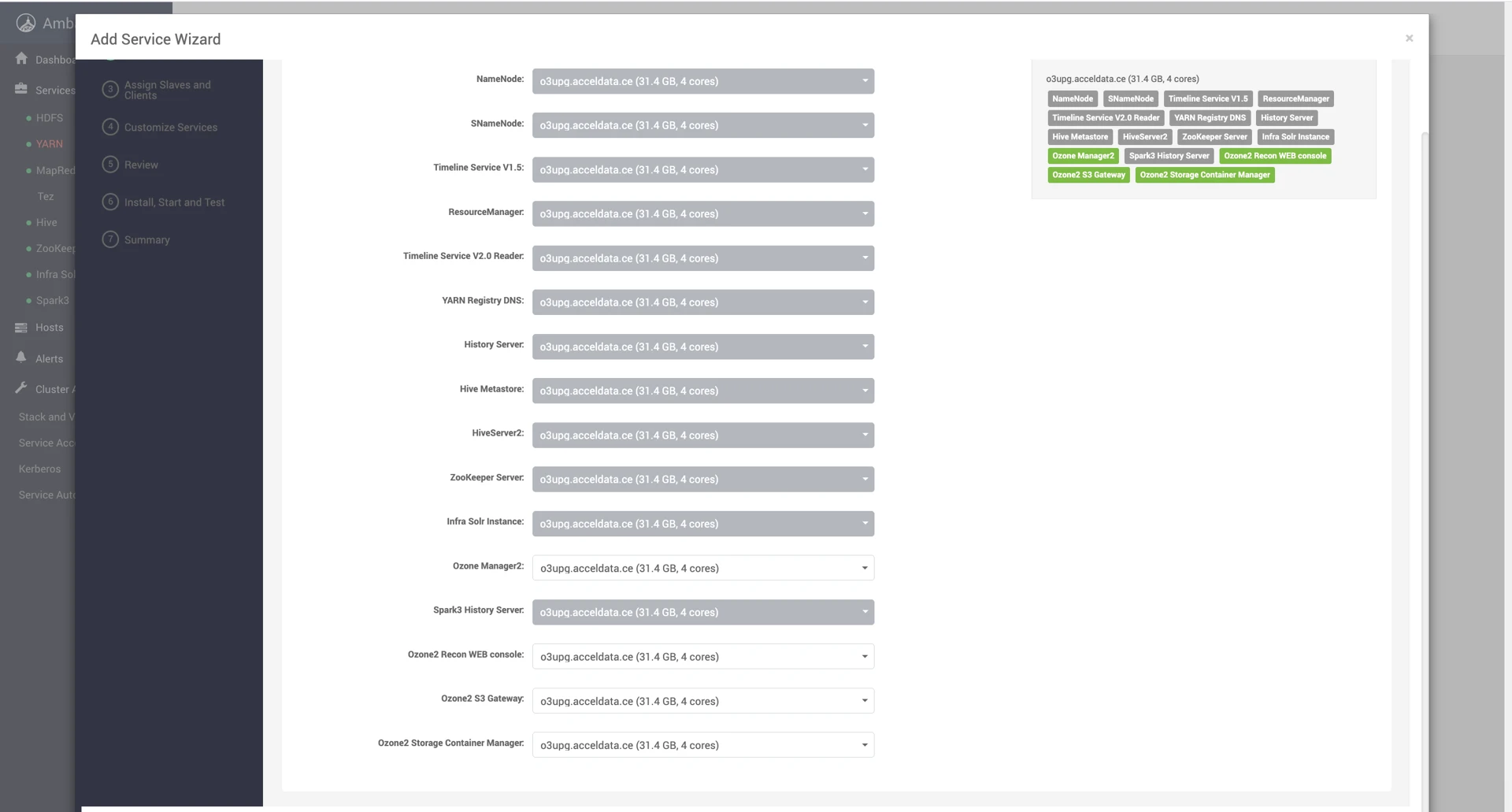

- Click Next and configure the Ozone2 component nodes and properties for your use case (choose 3 nodes each for OM, SCM and Datanode to maintain HA).

For a secure cluster with Kerberos, Ozone2 enables Kerberos authentication by default at installation time.

To enable SSL on Ozone2, configure the properties described in the SSL Enablement section.

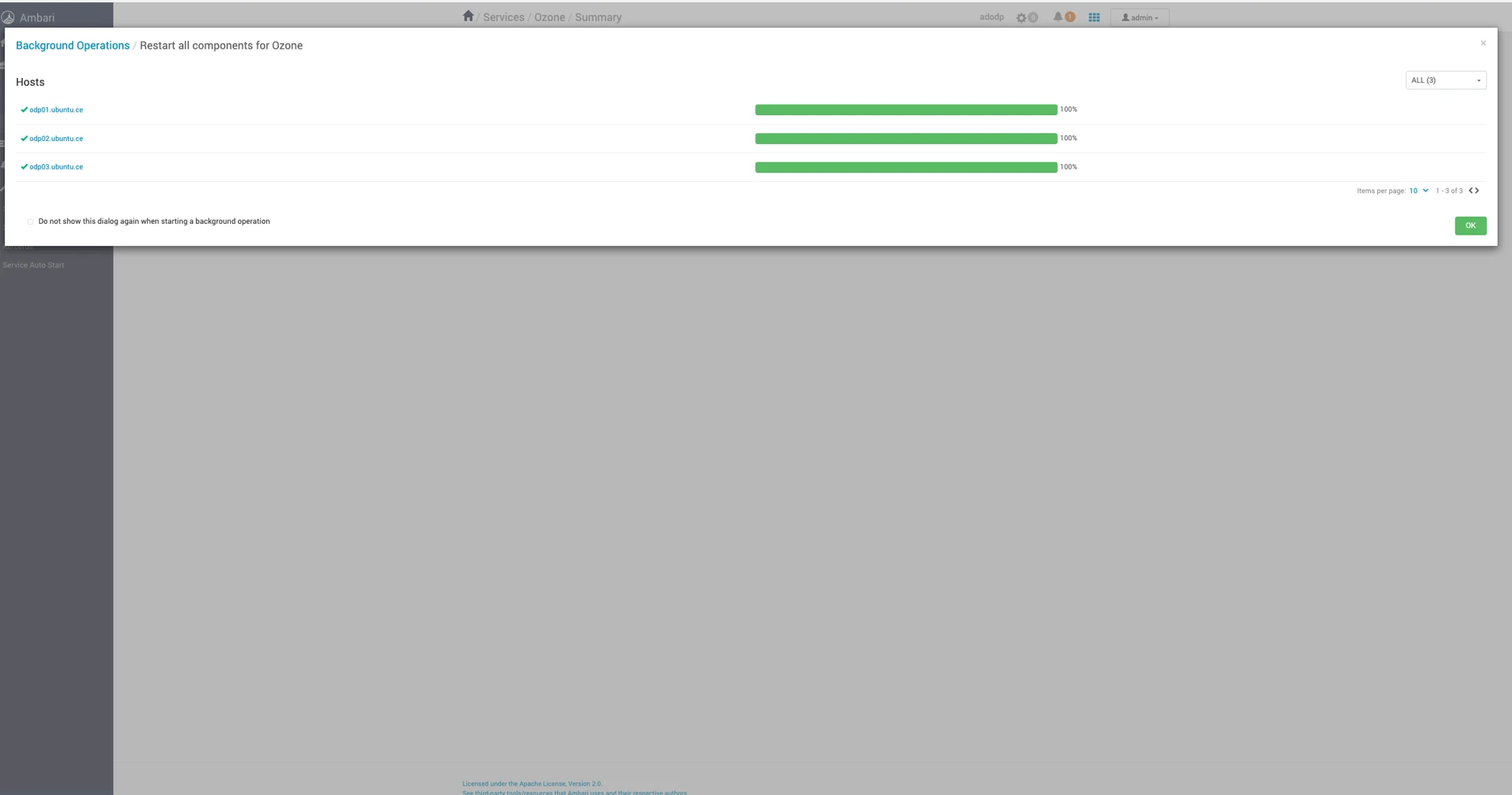

- Service installed successfully. Click Ok.

SSL Enablement

Update the following properties to match the SSL configuration of each host and component.

Enable SSL on all Ozone2 components to get a fully functional SSL-enabled Ozone2.

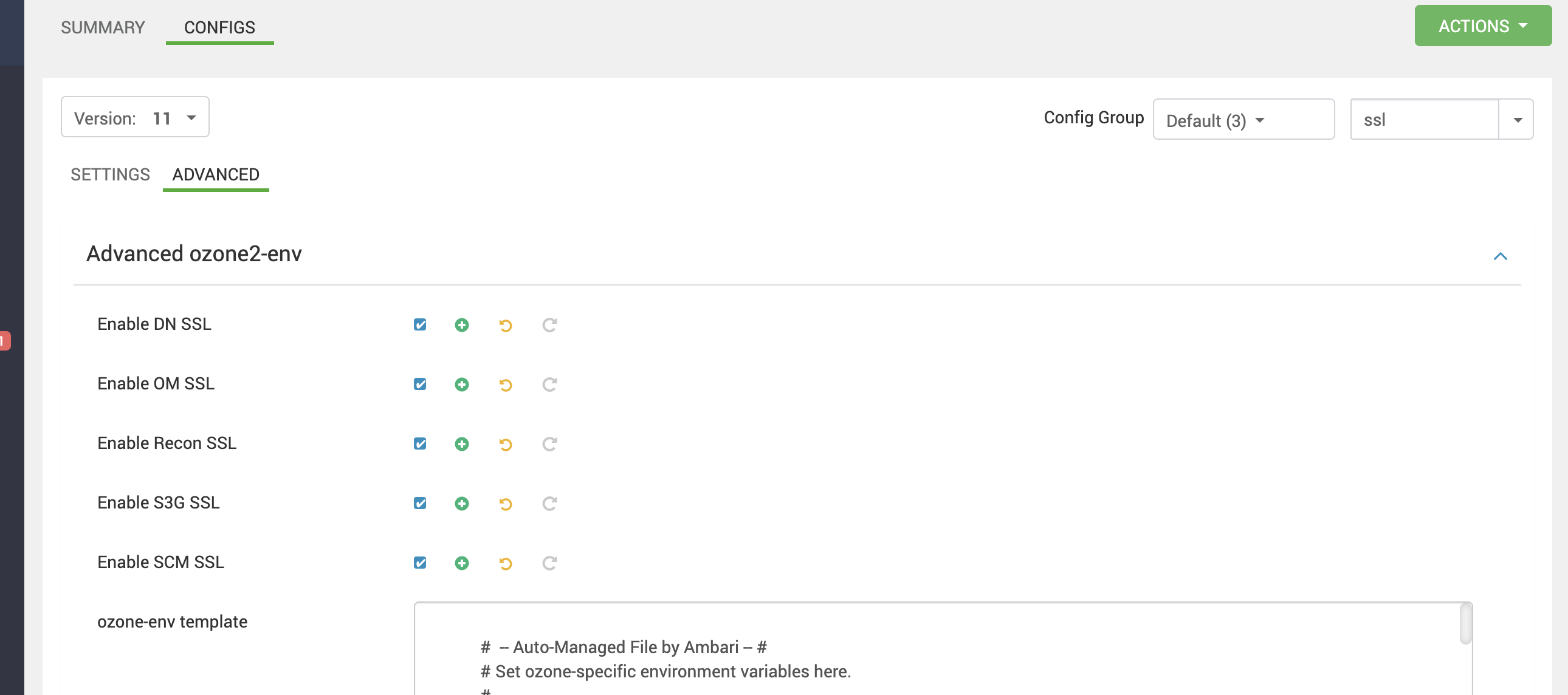

Ambari UI > Ozone2 > Configurations > Advanced ozone2-env: Check the following properties

To ozone2-site, add below configs:

| Property | Value |

|---|---|

| ozone.http.policy | HTTPS_ONLY |

| ozone.https.client.keystore.resource | ssl-client.xml |

| ozone.https.server.keystore.resource | ssl-server.xml |

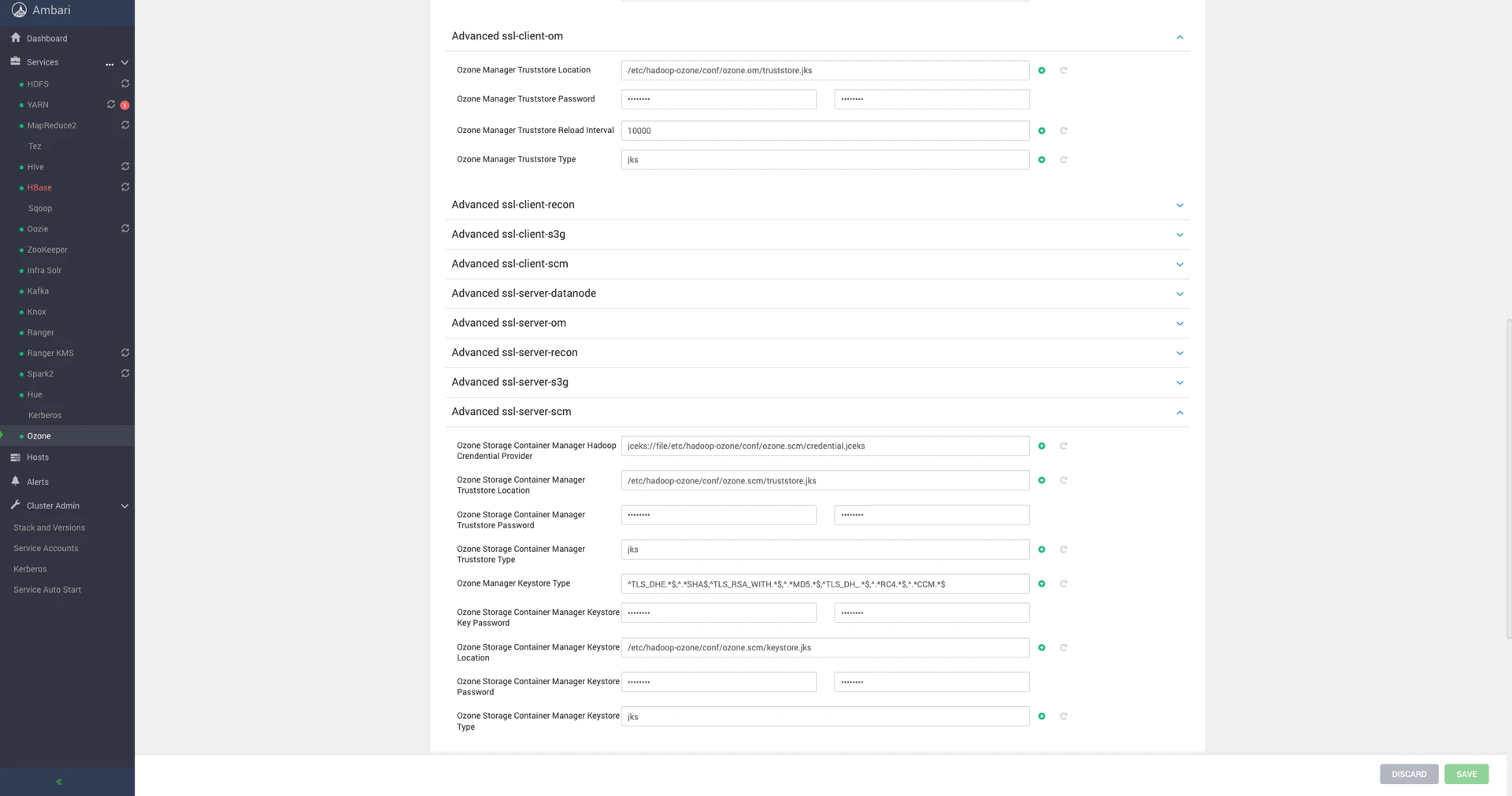

Then, configure the truststore and keystore in Advanced ozone2-ssl-client, Advanced ssl-client-datanode, Advanced ssl-client-om, Advanced ssl-client-recon, Advanced ssl-client-s3g, Advanced ssl-client-scm, Advanced ssl-server-datanode, Advanced ssl-server-om, Advanced ssl-server-recon, Advanced ssl-server-s3g, Advanced ssl-server-scm.

Kerberos Configuration

Ambari automation configures the Ozone2 service principal and keytab for the service, and SPNEGO for the UI. If you have a SPNEGO-enabled Ozone2 cluster and want to disable SPNEGO for all Ozone2 components, update the following properties as shown:

| Property | Value |

|---|---|

| ozone.security.http.kerberos.enabled | false |

| ozone.http.filter.initializers | |

This mpack supports Ozone2 with Kerberos security only on a fresh installation of Ozone2 in a kerberized ODP cluster, owing to development limitations.

Ranger Configuration

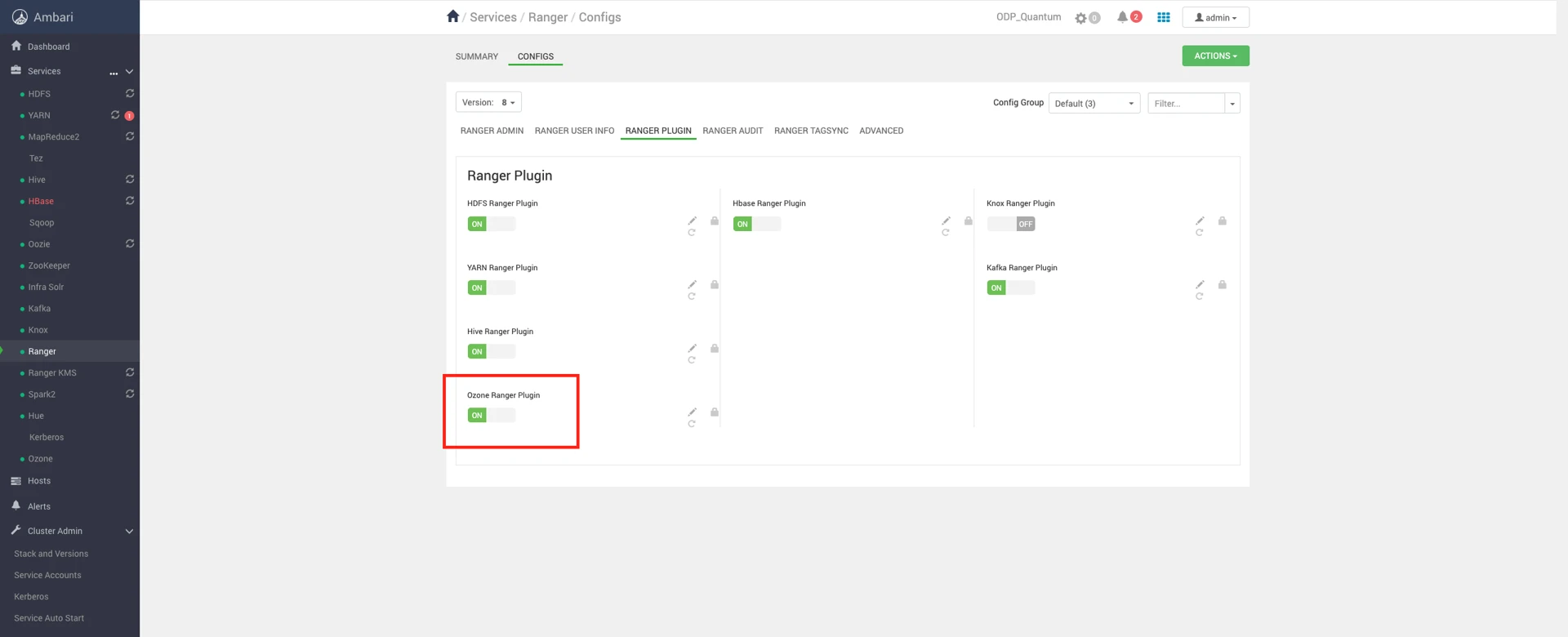

Enable or disable Ranger authorization from Ambari UI > Ranger > Configs > Ozone Ranger Plugin, then restart the service to apply the changes.

Before enabling the plugin, verify that ozone-filesystem-hadoop3-2.1.0.3.3.6.2-104.jar is present in the path below, or add it:

```shell
cp /usr/odp/current/ozone2-client/share/ozone/lib/ozone-filesystem-hadoop3-2.1.0.* /usr/odp/{stack_version}/ranger-admin/ews/webapp/WEB-INF/classes/ranger-plugins/ozone/
cp /usr/odp/current/ozone2-client/share/ozone/lib/bcprov-jdk15on-1.67.jar /usr/odp/{stack_version}/ranger-admin/ews/webapp/WEB-INF/classes/ranger-plugins/ozone/
```

Configure a Resource-based Service: Ozone

How to add Ozone2 service.

Procedure

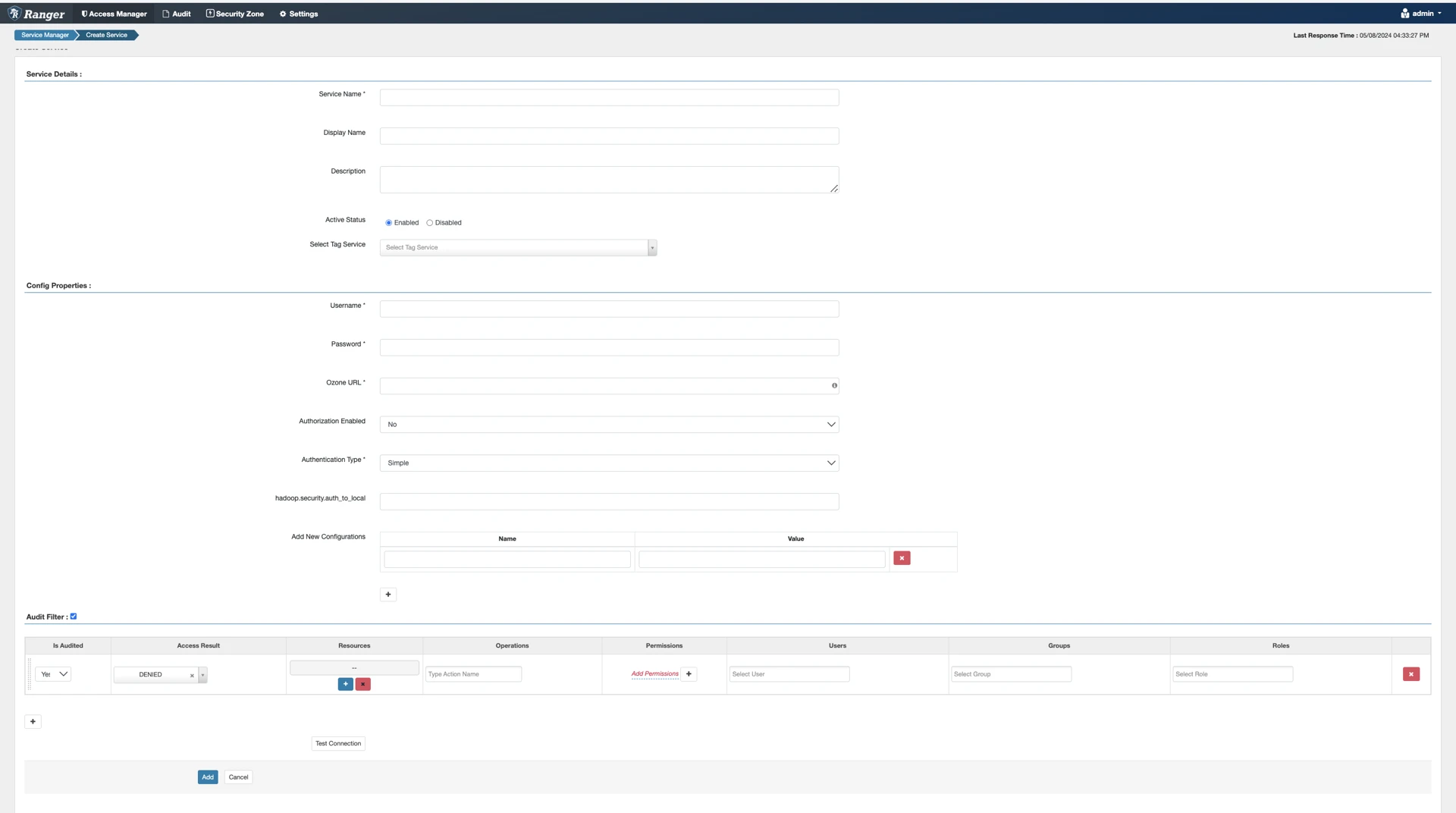

- On the Service Manager page, click the Add icon next to Ozone.

The Create Service page appears.

- Enter the following information on the Create Service page:

Table 3: Service Details

| Field name | Description |

|---|---|

| Service Name | The name of the service; required when configuring agents. |

| Description | A description of the service. |

| Active Status | Enabled or Disabled. |

| Select Tag Service | Select a tag-based service to apply the service and its tag-based policies to Ozone |

Table 4: Configuration Properties

| Field name | Description |

|---|---|

| Username | The end system username that can be used for connection. |

| Password | The password for the username entered above. |

| Ozone2 URL | Ozone2 URL, in the form <host>:<port> |

| Authorization Enabled | Authorization involves restricting access to resources. If enabled, users need authorization credentials. |

| Authentication Type | The type of authorization in use, as noted in the Hadoop configuration file core-site.xml; either simple or Kerberos (required only if authorization is enabled). This field was formerly named hadoop.security.authorization. |

| hadoop.security.auth_to_local | Maps the login credential to a username with Hadoop; use the value noted in the Hadoop configuration file core-site.xml. |

| Common Name For Certificate | The name of the certificate. This field is interchangeably named Common Name For Certificate and Ranger Plugin SSL CName in Create Service pages. |

| Add New Configurations | Add any other new configuration(s). |

- Click Test Connection.

- Click Add.

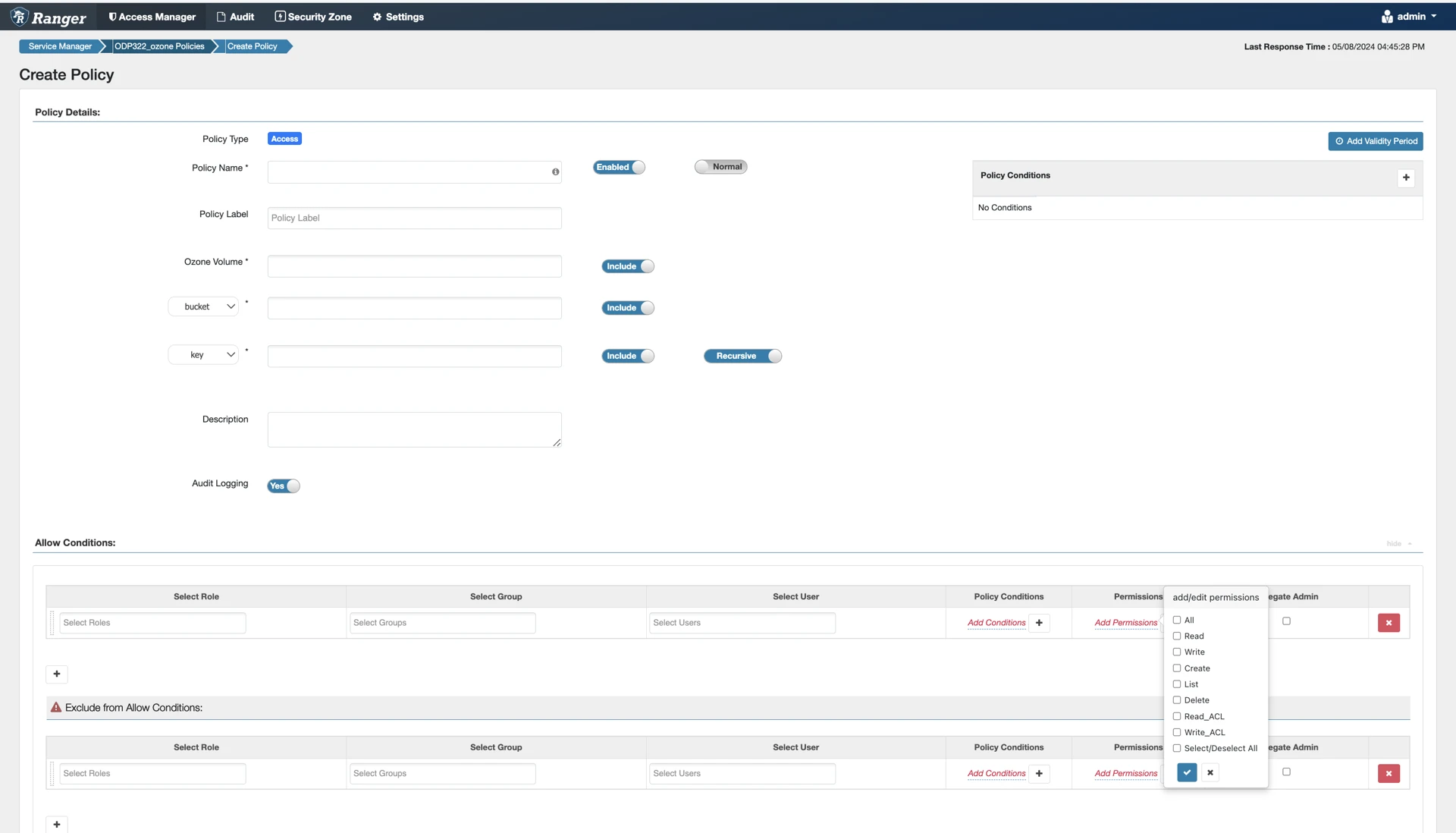

Configure a Resource-based Policy: Ozone

How to add a new policy to an existing Ozone service.

About this task

Through configuration, Apache Ranger enables both Ranger policies and Ozone permissions to be checked for a user request. When the Ozone Manager receives a user request, the Ranger plugin checks for policies set through the Ranger Service Manager. If there are no policies, the Ranger plugin checks for permissions set in Ozone, as per Ozone ACL.

We recommend that permissions be created at the Ranger Service Manager, and to have restrictive permissions at the Ozone level.

Procedure

- On the Service Manager page, select an existing Ozone service. The List of Policies page appears.

- Click Add New Policy. The Create Policy page appears.

- Complete the Create Policy page as follows:

Table 25: Policy Details

| Label | Description |

|---|---|

| Policy Name | Enter an appropriate policy name. This name cannot be duplicated across the system. This field is mandatory. |

| normal/override | Enables you to specify an override policy. When override is selected, the access permissions in the policy override the access permissions in existing policies. This feature can be used with Add Validity Period to create temporary access policies that override existing policies. |

| Volume | Define the volume for the policy. Type in the applicable volume name. The autocomplete feature displays available volume based on the entered text. |

| Bucket/none | Define the bucket for the policy. Type in the applicable bucket name. The autocomplete feature displays available buckets based on the entered text. Set bucket to none to provide volume level permissions. |

| Key/none | Define the key for the policy. Type in the applicable key name. The autocomplete feature displays available keys based on the entered text. Set key to none to provide bucket level permissions. The default recursive setting specifies that the resource path is recursive; you can also specify a non-recursive path. |

| Description | (Optional) Describe the purpose of the policy. |

| Audit Logging | Specify whether this policy is audited. (De-select to disable auditing). [Ranger Audit is not supported in Ozone] |

| Policy Label | Specify a label for this policy. You can search reports and filter policies based on these labels. |

| Add Validity Period | Specify a start and end time for the policy. |

Table 26: Allow Conditions

| Label | Description |

|---|---|

| Select Group | Specify the groups to which this policy applies. To designate a group as an Administrator, select the Delegate Admin check box. Administrators can edit or delete the policy, and can also create child policies based on the original policy. The public group contains all users, so granting access to the public group grants access to all users. |

| Select User | Specify the users to which this policy applies. To designate a user as an Administrator, select the Delegate Admin check box. Administrators can edit or delete the policy, and can also create child policies based on the original policy. |

| Permissions | Add or edit permissions: Read, Write, Create, Admin, Select/Deselect All. |

| Delegate Admin | You can use Delegate Admin to assign administrator privileges to the users or groups specified in the policy. Administrators can edit or delete the policy, and can also create child policies based on the original policy. |

- You can use the Plus (+) symbol to add additional conditions. Conditions are evaluated in the order listed in the policy. The condition at the top of the list is applied first, then the second, then the third, and so on.

- Click Add.

The Ranger permissions corresponding to the Ozone2 operations are as follows:

| Operation | Volume permission | Bucket permission | Key permission |
|---|---|---|---|
| Create volume | CREATE | | |
| List volume | LIST | | |
| Get volume info | READ | | |
| Delete volume | DELETE | | |
| Create bucket | READ | CREATE | |
| List bucket | LIST, READ | | |
| Get bucket info | READ | READ | |
| Delete bucket | READ | DELETE | |
| List key | READ | READ, LIST | |
| Write key | READ | READ | CREATE, WRITE |
| Read key | READ | READ | READ |

Ozone2 Command Line Interface

The Ozone2 shell is the primary interface for interacting with Ozone2 from the command line.

Volume operations

Ozone ACLs limit volume creation to Ozone administrators.

```shell
# CREATE VOLUME
# authenticate user with kinit, in case of kerberized cluster
$ ozone2 --config /etc/ozone2/conf/ozone.om sh volume create testvol
# OR
$ ozone2 --config /etc/ozone2/conf/ozone.om sh volume create o3://${om_service_id}/testvol

# VOLUME INFO
$ ozone2 --config /etc/ozone2/conf/ozone.om sh volume info testvol
24/05/02 07:41:31 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
24/05/02 07:41:31 INFO client.ClientTrustManager: Loading certificates for client.
{
  "metadata" : { },
  "name" : "testvol",
  "admin" : "ambari-qa",
  "owner" : "ambari-qa",
  "quotaInBytes" : -1,
  "quotaInNamespace" : -1,
  "usedNamespace" : 0,
  "creationTime" : "2024-05-02T05:10:09.564Z",
  "modificationTime" : "2024-05-02T05:10:09.564Z",
  "acls" : [ {
    "type" : "USER",
    "name" : "ambari-qa",
    "aclScope" : "ACCESS",
    "aclList" : [ "ALL" ]
  }, {
    "type" : "GROUP",
    "name" : "ambari-qa",
    "aclScope" : "ACCESS",
    "aclList" : [ "ALL" ]
  }, {
    "type" : "GROUP",
    "name" : "hadoop",
    "aclScope" : "ACCESS",
    "aclList" : [ "ALL" ]
  } ],
  "refCount" : 0
}
```

```shell
# LIST VOLUME
$ ozone2 --config /etc/ozone2/conf/ozone.om sh volume list --all

# DELETE VOLUME
$ ozone2 --config /etc/ozone2/conf/ozone.om sh volume delete testvol
24/05/02 07:44:15 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
24/05/02 07:44:15 INFO client.ClientTrustManager: Loading certificates for client.
Volume testvol is deleted
```

If the volume contains any buckets or keys, we can delete the volume recursively. This will delete all keys and buckets within the volume, and then delete the volume itself. After running this command there is no way to recover deleted contents.

```shell
$ ozone2 sh volume delete -r testvol
This command will delete volume recursively.
There is no recovery option after using this command, and no trash for FSO buckets.
Delay is expected running this command.
Enter 'yes' to proceed': yes
Volume testvol is deleted
```

Bucket operation

```shell
# CREATE BUCKET
# authenticate user with kinit before accessing below commands, in case of kerberized cluster
$ ozone2 --config /etc/ozone2/conf/ozone.om sh bucket create /testvol/bucket
# OR
$ ozone2 --config /etc/ozone2/conf/ozone.om sh bucket create o3://${om_service_id}/testvol/bucket
```

By default, all buckets are created to support file system (FILE_SYSTEM_OPTIMIZED).

If the bucket contains any keys, we can delete the bucket recursively. This will delete all the keys within the bucket, and then the bucket itself. After running this command there is no way to recover deleted contents.
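The recursive bucket delete described above can be sketched as follows. This is a minimal sketch, assuming the `-r` flag on `bucket delete` mirrors the `volume delete -r` behavior shown earlier; the helper function names and the `/testvol/bucket` path are illustrative and not part of the mpack.

```shell
# Sketch only: helper names are hypothetical.
OZONE_CONF="/etc/ozone2/conf/ozone.om"   # OM config directory used elsewhere in this guide

# Show bucket details before deleting anything. $1 = /volume/bucket
bucket_info() {
  ozone2 --config "$OZONE_CONF" sh bucket info "$1"
}

# Recursively delete a bucket and all keys in it. $1 = /volume/bucket
# Deleted contents cannot be recovered.
bucket_delete_recursive() {
  ozone2 --config "$OZONE_CONF" sh bucket delete -r "$1"
}
```

For example, run `bucket_info /testvol/bucket` to confirm the target, then `bucket_delete_recursive /testvol/bucket`. On a kerberized cluster, authenticate with kinit first.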

Key operation

If the key is in an FSO bucket, it is moved to the trash when deleted. The trash folder is located at /<volume>/<bucket>/.Trash/<user>. If the key is in an OBS bucket, it is permanently deleted.
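A minimal sketch of common key operations with the Ozone2 shell, assuming the `/testvol/bucket` bucket created above already exists. The helper names, key names, and local file paths are placeholders chosen for illustration.

```shell
# Sketch only: helper names are hypothetical.
OZONE_CONF="/etc/ozone2/conf/ozone.om"   # OM config directory used elsewhere in this guide

# Upload a local file as a key. $1 = /volume/bucket/key, $2 = local file
key_put() { ozone2 --config "$OZONE_CONF" sh key put "$1" "$2"; }

# Download a key to a local file. $1 = /volume/bucket/key, $2 = local file
key_get() { ozone2 --config "$OZONE_CONF" sh key get "$1" "$2"; }

# List keys in a bucket. $1 = /volume/bucket
key_list() { ozone2 --config "$OZONE_CONF" sh key list "$1"; }

# Delete a key. $1 = /volume/bucket/key
# In an FSO bucket the key goes to trash; in an OBS bucket it is removed permanently.
key_delete() { ozone2 --config "$OZONE_CONF" sh key delete "$1"; }
```

For example: `key_put /testvol/bucket/key1 ./sample.txt`, then `key_list /testvol/bucket`, then `key_delete /testvol/bucket/key1`.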

Working with Ozone File System

ODP currently does not support Ozone2 as the default file system. However, ODP Ozone2 is configured to work independently of HDFS.

Prerequisites

To enable ofs support with applications, configure applications to use necessary jars and ozone2-site.xml.

- Add the ozone-filesystem-hadoop3.jar to the application classpath.

- Add the following configs to core-site.xml

- Add the following configs from ozone2-site.xml to hdfs-site.xml in Ambari.

| Property | Value |

|---|---|

| ozone.om.service.ids | omservice |

| ozone.om.address.omservice.om0 | <om-node1-host>:9862 |

| ozone.om.address.omservice.om1 | <om-node2-host>:9862 |

| ozone.om.address.omservice.om2 | <om-node3-host>:9862 |

| ozone.om.nodes.omservice | om0,om1,om2 |

| ozone.om.kerberos.keytab.file | /etc/security/keytabs/ozone.om.service.keytab |

| ozone.om.kerberos.principal | om/_HOST@ADSRE.COM |

- Restart hadoop services

HDFS with OFS

Run hdfs dfs operations against Ozone2 storage.

Here are some examples:

- List files

- Create directory

- Upload file

- Reading file
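The four operations listed above can be sketched with `hdfs dfs` and an `ofs://` URI. This is a sketch under assumptions: the OM service ID `omservice` comes from the configuration table above, while the `testvol/bucket` path, the `dir1` directory, and the function name are illustrative.

```shell
# Sketch only: volume, bucket, and directory names are placeholders.
OM_SERVICE_ID="omservice"
OFS_BUCKET="ofs://${OM_SERVICE_ID}/testvol/bucket"

# $1 = local file to upload
ofs_demo() {
  src="$1"
  hdfs dfs -ls "${OFS_BUCKET}/"                           # list files
  hdfs dfs -mkdir -p "${OFS_BUCKET}/dir1"                 # create directory
  hdfs dfs -put "$src" "${OFS_BUCKET}/dir1/"              # upload file
  hdfs dfs -cat "${OFS_BUCKET}/dir1/$(basename "$src")"   # read file
}
```

Calling `ofs_demo ./sample.txt` runs the four steps in order. On a secure cluster, authenticate with kinit before calling it.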

INFO logs from retry.RetryInvocationHandler (com.google.protobuf.ServiceException) can be ignored: the client contacts each OM host in turn to identify the leader OM.

YARN with Ozone2

YARN can run jobs that read data from or write data to the Ozone file system.

- Add ozone-filesystem-hadoop3-2.1.0*.jar to mapreduce.application.classpath in mapred-site.xml.

- If Ranger authorization is enabled, grant the necessary permissions on OFS buckets, HDFS paths, and YARN queues, as required by the job.

- On a secure cluster, authenticate the respective user with Kerberos.

- Submit the job.

Here is a sample job that runs wordcount on a file in OFS and stores the wordcount result in OFS.
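A wordcount submission of this kind might look like the sketch below. The location of the MapReduce examples jar is an assumption about the ODP layout, and the bucket path and function name are illustrative; adjust them to your cluster.

```shell
# Sketch only: the jar location is an assumed path, not confirmed by this guide.
EXAMPLES_JAR="/usr/odp/current/hadoop-mapreduce-client/hadoop-mapreduce-examples.jar"
OFS_ROOT="ofs://omservice/testvol/bucket"

# $1 = input file in the bucket, $2 = output directory in the bucket (must not exist yet)
run_wordcount() {
  yarn jar "$EXAMPLES_JAR" wordcount "${OFS_ROOT}/$1" "${OFS_ROOT}/$2"
}
```

For example, `run_wordcount input.txt wordcount-out` reads `input.txt` from the bucket and writes the counts under `wordcount-out`.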

If the job fails with the following error:

```
INFO mapreduce.Job: Task Id : task-id , Status : FAILED
Error: java.io.IOException: Cannot resolve OM host omservice in the URI
```

configure the MapReduce job to use ozone-site.xml. Alternatively, you can pass the configs at runtime:
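One way to pass the OM service configuration at runtime is through `-D` generic options, sketched below. The property names are the ones listed in the prerequisites table; the examples jar location, host arguments, and function name are assumptions for illustration.

```shell
# Sketch only: the jar location is an assumed path, not confirmed by this guide.
EXAMPLES_JAR="/usr/odp/current/hadoop-mapreduce-client/hadoop-mapreduce-examples.jar"
OM_SERVICE_ID="omservice"
OM_PORT=9862   # default ozone.om.rpc.port

# $1..$3 = OM hostnames, $4 = input path, $5 = output path (both relative to the OFS root)
run_wordcount_with_om_config() {
  yarn jar "$EXAMPLES_JAR" wordcount \
    -Dozone.om.service.ids="$OM_SERVICE_ID" \
    -Dozone.om.nodes."$OM_SERVICE_ID"=om0,om1,om2 \
    -Dozone.om.address."$OM_SERVICE_ID".om0="$1:$OM_PORT" \
    -Dozone.om.address."$OM_SERVICE_ID".om1="$2:$OM_PORT" \
    -Dozone.om.address."$OM_SERVICE_ID".om2="$3:$OM_PORT" \
    "ofs://${OM_SERVICE_ID}/$4" "ofs://${OM_SERVICE_ID}/$5"
}
```

For example: `run_wordcount_with_om_config om1.example.com om2.example.com om3.example.com testvol/bucket/input.txt testvol/bucket/wc-out`. On a secure cluster, the Kerberos properties from the same table may also need to be passed.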

HIVE with Ozone

Although Hive installation and operations use HDFS as the default file system, Ozone can be configured as a parallel file system for Hive operations.

Configure Hive to work with Ozone :

- Navigate to the Ambari UI > Hive > Configs > Advanced Hive-env and add

- Restart Hive and Tez.

- If Ranger authorization is enabled, grant the necessary permissions to OFS (Ozone File System) buckets, HDFS paths, and Hive URL to allow the required operations as per the job requirements.

- Authenticate users with Kerberos credentials when operating in a secure cluster.

If queries fail with the following error when running queries as the end user is enabled in Hive (see https://issues.apache.org/jira/browse/HDDS-664):

```
org.apache.hadoop.security.authorize.AuthorizationException: User: hive is not allowed to impersonate ...
```

go to Ambari UI > Ozone > Configurations > Custom Core-site, add the following configs, and restart the services:

| Property | Value |

|---|---|

| hadoop.proxyuser.hive.groups | * |

| hadoop.proxyuser.hive.hosts | * |

| hadoop.proxyuser.hive.users | * |

Store tables in OFS

To create tables in OFS, add LOCATION '<OFS_URI>' to the CREATE TABLE command. The Hive table then resides at the specified location in Ozone, and all subsequent data changes apply to the table at the given OFS_URI.

Here are sample Hive operations that access OFS:

- Connect to Beeline

- Create new table in ofs

- Validate new table

- Add values to table

- Validate newly added values
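The steps above can be sketched with non-interactive Beeline calls. This is a sketch under assumptions: the JDBC URL, table name, columns, and OFS location are placeholders, and an external table is used here as one possible way to attach a LOCATION; a kerberized HiveServer2 would also need its principal in the JDBC URL.

```shell
# Sketch only: URL, table, and location are placeholders.
HIVE_JDBC_URL="jdbc:hive2://<hiveserver2-host>:10000/default"
TABLE_LOCATION="ofs://omservice/testvol/bucket/employee"

# Run one SQL statement through Beeline. $1 = SQL text
hive_sql() {
  beeline -u "$HIVE_JDBC_URL" -e "$1"
}

hive_ofs_demo() {
  # Create new table in OFS
  hive_sql "CREATE EXTERNAL TABLE IF NOT EXISTS employee (id INT, name STRING) LOCATION '${TABLE_LOCATION}'"
  # Validate new table (the Location field should show the ofs:// path)
  hive_sql "DESCRIBE FORMATTED employee"
  # Add values to table
  hive_sql "INSERT INTO employee VALUES (1, 'Alice'), (2, 'Bob')"
  # Validate newly added values
  hive_sql "SELECT * FROM employee"
}
```

Run `hive_ofs_demo` after replacing the placeholders with values from your cluster.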

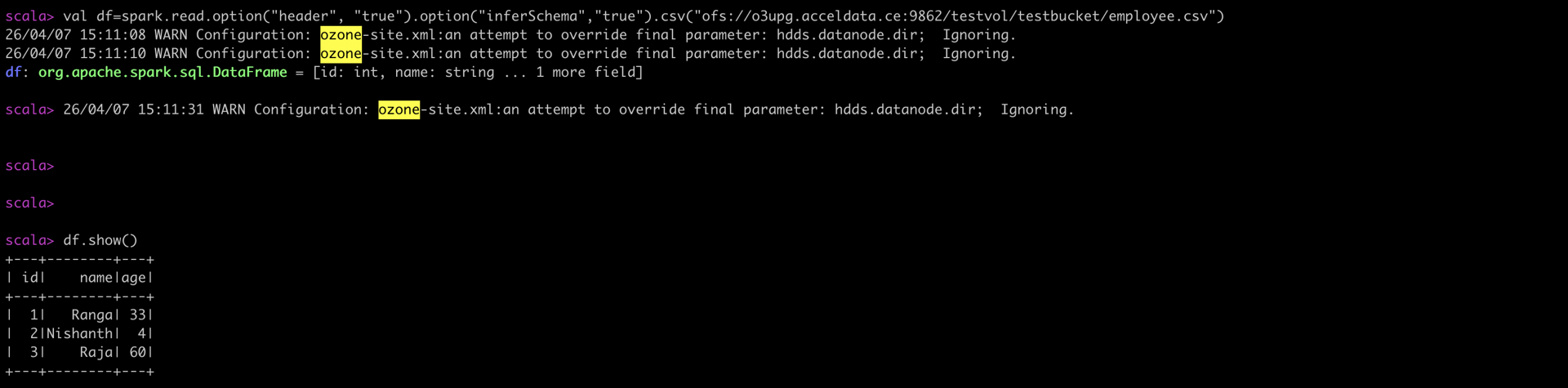

SPARK with Ozone2

Although Ozone2 can work independently, current Ambari does not support Spark installation without HDFS.

Apache Spark can access data from Apache Ozone2 and perform tasks. To access Apache Ozone2, configure Spark:

- Configure spark shell to use /usr/odp/current/ozone2-client/share/ozone/lib/ozone-filesystem-hadoop3-client-2.1.0.3.3.6.2-104.jar.

Accessing Apache Ozone data in Apache Spark3

- Creating sample data to be read by Spark3

- Upload the employee.csv file to Ozone2

- If Ranger authorization is enabled, grant the spark user the necessary permissions on the respective bucket and file through Ozone policies.

- Allow spark user to submit yarn applications.

- Launch spark-shell

- Accessing csv file content in ozone as spark df
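The steps above might be scripted as in the sketch below. The jar path is the one named earlier in this section; the CSV columns, the OFS path, and the function names are made up for illustration, and feeding Scala statements to spark-shell on standard input is just one convenient way to run them non-interactively.

```shell
# Sketch only: sample data, OFS path, and function names are placeholders.
OZONE_FS_JAR="/usr/odp/current/ozone2-client/share/ozone/lib/ozone-filesystem-hadoop3-client-2.1.0.3.3.6.2-104.jar"
CSV_URI="ofs://omservice/testvol/bucket/employee.csv"

# Create sample data to be read by Spark. $1 = local output file
make_sample_csv() {
  printf 'id,name,dept\n1,Alice,eng\n2,Bob,ops\n' > "$1"
}

# Upload the CSV file to Ozone2. $1 = local file
upload_csv() {
  hdfs dfs -put "$1" "$CSV_URI"
}

# Launch spark-shell with the Ozone file system jar and read the CSV as a DataFrame.
read_csv_in_spark_shell() {
  spark-shell --master yarn --jars "$OZONE_FS_JAR" <<EOF
val df = spark.read.option("header", "true").csv("${CSV_URI}")
df.show()
EOF
}
```

For example: `make_sample_csv employee.csv`, `upload_csv employee.csv`, then `read_csv_in_spark_shell`.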

Custom PySpark Job

To run a Spark job using OFS, use the following command:
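A spark-submit invocation of this kind could be sketched as follows. The jar path is the one used for spark-shell earlier; the wrapper function, the `SPARK_SUBMIT` override variable, and the application and OFS paths in the example are hypothetical.

```shell
# Sketch only: wrapper name and override variable are hypothetical.
SPARK_SUBMIT="${SPARK_SUBMIT:-spark-submit}"
OZONE_FS_JAR="/usr/odp/current/ozone2-client/share/ozone/lib/ozone-filesystem-hadoop3-client-2.1.0.3.3.6.2-104.jar"

# Arguments: [extra spark-submit options] <application> [application arguments]
submit_ofs_job() {
  "$SPARK_SUBMIT" --master yarn --jars "$OZONE_FS_JAR" "$@"
}
```

For example: `submit_ofs_job app.py ofs://omservice/testvol/bucket/input.txt ofs://omservice/testvol/bucket/output`. Extra spark-submit options go before the application file.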

For a secure cluster, add --keytab <keytab> --principal <principal> values to above command.

Here is a sample custom job that reads Ozone data with Apache Spark and writes the output to Ozone.

- Custom Pyspark application using ofs to access data and write output

- Uploading sample input file to ofs

- Provide necessary permissions to spark user to access respective bucket and key, in case of ranger authorization enabled.

- Running PySpark app in secure cluster

- Validate output in ofs

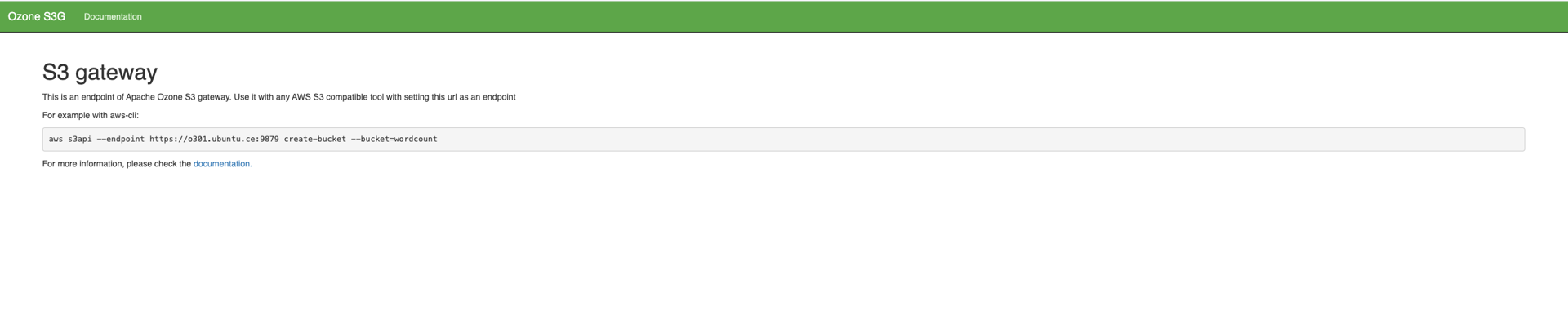

Using Ozone2 S3 Gateway

Ozone provides an S3-compatible REST interface through the Ozone2 S3 Gateway, so the object store data can be used with any S3-compatible tool. Although the Ozone2 S3 Gateway is an addition to the regular Ozone2 components, Acceldata’s ODP mpack installs and starts it as part of the Ozone2 service. S3 buckets are stored under the /s3v volume.

Prerequisites

To use an S3 endpoint, configure an access key and secret for AWS-compatible tools. The examples here use awscli.

- Generate an access key and secret for AWS: if security is not enabled, you can use any AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY. If security is enabled, you can get the key and the secret with the `ozone2 s3 getsecret` command (Kerberos-based authentication is required).

- Export these credentials on your S3 endpoint. Alternatively, you may create a new profile with the Ozone-related credentials and use that profile to run S3 utility tasks on awscli.

- Verify your S3 endpoint from S3 Gateway UI.

Starting in Ozone 2.1.0, the secret is shown only once, when it is generated with getsecret. If the secret is lost, the user must run revokesecret before generating a new secret with getsecret.
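The credential setup above can be sketched as follows. The profile name `ozone` and the function names are hypothetical; `aws configure set` with `--profile` is the standard AWS CLI way to store values in a named profile. In an HA setup, `getsecret` may need additional options to identify the OM service.

```shell
# Sketch only: profile and function names are hypothetical.
AWS_PROFILE_NAME="ozone"

# Print the S3 access key and secret for the current Kerberos user.
# The secret is shown only once, so store it right away.
get_s3_secret() {
  ozone2 s3 getsecret
}

# Store the credentials in a named AWS CLI profile.
# $1 = access key ID, $2 = secret access key
configure_ozone_profile() {
  aws configure set aws_access_key_id "$1" --profile "$AWS_PROFILE_NAME"
  aws configure set aws_secret_access_key "$2" --profile "$AWS_PROFILE_NAME"
}
```

After `kinit`, run `get_s3_secret`, then pass the printed values to `configure_ozone_profile`. Later AWS CLI calls can select the profile with `--profile ozone`.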

Ozone2 S3 Gateway to work with AWS CLI

Ozone S3 Gateway supports various bucket and object operations that the Amazon S3 API provides. Amazon Web Services (AWS) command-line interface (CLI) is one such utility tool, used to interact with S3 Gateway and work with various Ozone storage elements.

Examples of using AWS CLI for Ozone S3 Gateway :

- Create new bucket

- Upload key to new bucket

- Confirm key upload

- Verify file content through ozone
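The four steps above can be sketched with `aws s3api`. The endpoint host is a placeholder, port 9878 is the default `ozone.s3g.http-port` from the endpoint table, and the function names are hypothetical. Credentials are assumed to come from the environment or the active AWS CLI profile.

```shell
# Sketch only: endpoint host and function names are placeholders.
S3G_ENDPOINT="http://<s3g-host>:9878"
OZONE_CONF="/etc/ozone2/conf/ozone.om"

# Run an s3api subcommand against the Ozone2 S3 Gateway.
s3api() {
  aws s3api --endpoint-url "$S3G_ENDPOINT" "$@"
}

# $1 = bucket name, $2 = key name, $3 = local file
s3_demo() {
  s3api create-bucket --bucket "$1"                      # create new bucket
  s3api put-object --bucket "$1" --key "$2" --body "$3"  # upload key to new bucket
  s3api list-objects --bucket "$1"                       # confirm key upload
  # Verify file content through Ozone: S3 buckets live under the /s3v volume.
  ozone2 --config "$OZONE_CONF" sh key cat "/s3v/$1/$2"
}
```

For example: `s3_demo bucket1 key1 ./sample.txt`.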

SSL enabled Ozone2 S3 Gateway to work with AWS CLI

When SSL is enabled on Ozone, the S3 Gateway exposes an HTTPS endpoint. The Python SSL support used by the AWS CLI accepts certificates in PEM format. If your CA certificate is in any other format, convert it to PEM on all required client nodes.

Pass the certificate in PEM file format to the aws s3api commands to perform S3 utility tasks. For example :

- Create new bucket

- Upload key to new bucket

- Confirm key upload

- Verify file content through ozone
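For the SSL case, a minimal sketch follows. It assumes the CA certificate is DER-encoded (other source formats need a different conversion), uses the default HTTPS port 9879 from the endpoint table, and passes the PEM file through the AWS CLI `--ca-bundle` option. File paths, host, and function names are placeholders.

```shell
# Sketch only: paths, host, and function names are placeholders.
S3G_HTTPS_ENDPOINT="https://<s3g-host>:9879"
CA_PEM="/path/to/ca.pem"

# Convert a DER-encoded CA certificate to PEM. $1 = DER input, $2 = PEM output
der_to_pem() {
  openssl x509 -inform der -in "$1" -out "$2"
}

# Run an s3api subcommand against the HTTPS endpoint, trusting the given CA bundle.
s3api_ssl() {
  aws s3api --endpoint-url "$S3G_HTTPS_ENDPOINT" --ca-bundle "$CA_PEM" "$@"
}
```

For example: `s3api_ssl create-bucket --bucket bucket1`, then `s3api_ssl put-object --bucket bucket1 --key key1 --body ./sample.txt` and `s3api_ssl list-objects --bucket bucket1`.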

Revoke access to generated aws credentials

Revoke access to AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY once your use case is completed.
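Revocation can be sketched with the `revokesecret` subcommand mentioned in the prerequisites. The function name is hypothetical, and clearing the exported variables is an extra cleanup step assumed here for shells that exported the credentials.

```shell
# Sketch only: function name is hypothetical.
revoke_s3_access() {
  # Revoke the S3 secret of the current Kerberos user.
  ozone2 s3 revokesecret
  # Drop any credentials exported in this shell session.
  unset AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY
}
```

Run `revoke_s3_access` in the shell where the credentials were exported. Credentials stored in an AWS CLI profile need to be removed from the profile separately.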

Known Issues / Limitations

- Only a single Ozone installation is supported per cluster.

- Currently, Ozone Manager Java Heap size is set to half of the machine RAM. Adjust the Java heap size as required at the time of installation.

- The Ozone mpack supports secure Ozone only on fresh Ozone installations on kerberized clusters.

- In the new Ozone2 mpack, the S3 Gateway (S3G) web utility has been split across two separate ports, which may result in warning alerts being displayed. https://issues.apache.org/jira/browse/HDDS-7307. The new ozone S3G port for jmx operations is 19878 (default), while the S3 Gateway endpoint for static content is 9878 (default).

- HA and non-HA Ozone installations are supported only on fresh installations. Non-HA to HA migration is yet to be certified.